Contents

- 1 Introduction

- 2 The general legal framework governing the use of AI in companies: a multi-level approach

- 3 What obligations do employers face when using AI in the workplace?

- 4 AI deployer or AI provider: a particularly fragile legal boundary

- 5 What measures should companies take to properly govern the use of AI?

- 6 Conclusion

- 7 FAQ

Introduction

Artificial intelligence (AI) is rapidly spreading within companies. Task automation, decision-support tools, content generation and the optimisation of internal processes are becoming increasingly common, often driven by employees themselves in a context where AI tools are widely accessible.

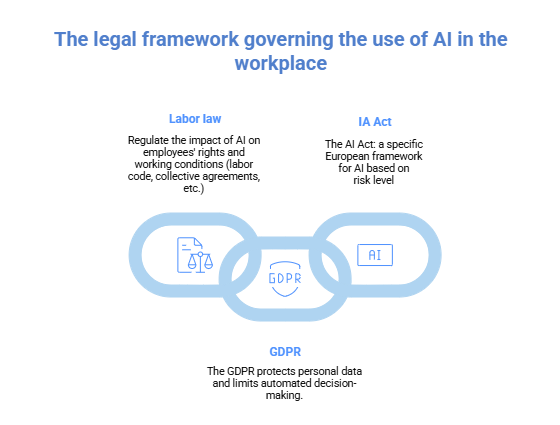

This rapid adoption may create the illusion of freedom of use. In reality, the use of AI in the workplace is subject to a dense and now clearly structured legal framework, resulting from the combined application of labour law, the General Data Protection Regulation (GDPR), collective bargaining agreements (CBAs) and, more recently, the European regulation on artificial intelligence (the AI Act).

AI is therefore neither a neutral tool nor a purely technical instrument. Wherever it affects work organisation, employees’ rights or the processing of data, it cannot be used freely in the workplace.

The general legal framework governing the use of AI in companies: a multi-level approach

The use of AI in the workplace is governed by an intricate set of national and European rules, which fully apply whenever an algorithmic tool influences work organisation, decision-making processes or the processing of personal data.

The AI Act: a new pillar, but not a standalone one

Regulation (EU) 2024/1689 on artificial intelligence (the AI Act), adopted in June 2024, is the first European legal framework specifically dedicated to AI. It introduces a risk-based approach to AI systems.

In particular, AI systems are classified as high-risk when they are used for:

- recruitment and candidate selection,

- employee evaluation or scoring,

- performance management,

- monitoring or control of professional behaviour.
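For teams that keep an inventory of their AI tools, the list above can be turned into a simple screening step. The following is only a minimal sketch: the use labels, the `AITool` record and the tool names are hypothetical, and a match is a prompt for legal review rather than a legal classification.

```python
from dataclasses import dataclass

# Employment-related uses listed above as high-risk.
# The labels themselves are illustrative, not taken from the Regulation.
HIGH_RISK_USES = {
    "recruitment",
    "employee_evaluation",
    "performance_management",
    "behaviour_monitoring",
}


@dataclass
class AITool:
    name: str        # hypothetical tool name
    uses: set[str]   # how the tool is actually used internally


def high_risk_uses(tool: AITool) -> set[str]:
    """Return the declared uses that appear in the high-risk list."""
    return tool.uses & HIGH_RISK_USES


# Hypothetical inventory entries, for illustration only.
inventory = [
    AITool("cv-screener", {"recruitment"}),
    AITool("meeting-summariser", {"note_taking"}),
]

# Names of the tools that should be escalated for legal review.
flagged = [tool.name for tool in inventory if high_risk_uses(tool)]
```

Here `flagged` contains only the tool whose declared uses overlap with the list, which is the one a compliance team would examine first.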

Some provisions of the Regulation have applied since 2 February 2025 and already impose key obligations on employers, including:

- the prohibition of certain AI practices considered incompatible with fundamental rights;

- the obligation to ensure a sufficient level of AI literacy, notably through appropriate training, among persons using AI systems in a professional context.

However, practical experience shows that the AI Act cannot be considered in isolation. It forms part of a pre-existing legal framework that remains fully applicable.

The GDPR: the central legal foundation of AI in the workplace

In almost all cases, AI used in companies involves the processing of personal data. The GDPR therefore constitutes the unavoidable legal entry point.

As a general rule, the employer remains the data controller, even where the AI tool is provided by a third-party service provider. In this capacity, the employer must in particular:

- define a specific, explicit and legitimate purpose;

- identify a valid legal basis;

- comply with the principles of data minimisation, proportionality and security;

- provide clear and transparent information to employees.

The French Data Protection Authority (CNIL) regularly reiterates that algorithmic systems cannot escape the fundamental requirements for the protection of individuals’ rights.

The GDPR (Article 22) also strictly regulates decisions based solely on automated processing that produce legal or similarly significant effects. In practice, meaningful human intervention is required, particularly in recruitment, evaluation or disciplinary processes.
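In an internal tool, the human-intervention requirement can be reflected by refusing to record a final outcome without a named reviewer. The sketch below assumes a hypothetical `Decision` record and `finalise` helper; it illustrates the principle only and does not by itself make a process compliant.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Decision:
    subject: str                    # e.g. a candidate or employee reference
    ai_recommendation: str          # what the AI system suggested
    reviewer: Optional[str] = None  # human who reviewed the case
    outcome: Optional[str] = None   # final decision, set by the reviewer


def finalise(decision: Decision, reviewer: str, outcome: str) -> Decision:
    """Record the outcome only if a named human reviewer is provided."""
    if not reviewer.strip():
        raise ValueError("a named human reviewer is required")
    decision.reviewer = reviewer
    decision.outcome = outcome
    return decision
```

The AI recommendation stays on the record next to the reviewer's outcome, so it remains possible to show afterwards that the two were distinct steps.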

Labour law and collective bargaining agreements: a decisive social framework

Beyond European regulations, labour law and collective bargaining agreements (CBAs) play a central role in governing the use of AI in companies.

From a legal perspective, AI is treated as a new workplace technology. As such, its introduction triggers, in many sectors, specific obligations to inform and consult employee representative bodies.

Collective bargaining agreements frequently regulate:

- the introduction of new technologies affecting work organisation or working conditions;

- employee monitoring, surveillance or evaluation systems;

- measures likely to have an impact on employment, skills or management methods.

As a result, an AI project may comply with the GDPR and the AI Act and still be legally vulnerable if it fails to comply with applicable collective bargaining obligations. This level of regulation is still too often underestimated by companies.

What obligations do employers face when using AI in the workplace?

Employers may use AI to organise work, improve performance or secure internal processes, provided that they respect a fundamental principle: decision-making responsibility remains human.

AI may assist, inform or automate certain tasks, but it can never replace the employer’s responsibility, whether towards employees or supervisory authorities.

The growing use of generative AI tools also raises a specific risk: the unintentional disclosure of personal or confidential data through prompts. Such data may be processed outside the European Union or reused for model training purposes.

Employers must therefore:

- strictly regulate authorised uses;

- train employees on legal and compliance risks;

- select service providers offering strong guarantees in terms of confidentiality and security.
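One practical safeguard against accidental disclosure is to mask obvious personal identifiers before a prompt leaves the company. The following is a minimal sketch under stated assumptions: the `redact` function and both patterns are illustrative, the phone pattern only targets French-style numbers, and pattern matching of this kind will miss many identifiers (names, addresses, employee IDs), so it complements usage rules and training rather than replacing them.

```python
import re

# Illustrative patterns only; real deployments need broader detection.
EMAIL = re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")
# French-style phone numbers such as "06 12 34 56 78" or "+33 6 12 34 56 78".
PHONE = re.compile(r"(?<!\w)(?:\+33[ .-]?|0)[1-9](?:[ .-]?\d{2}){4}(?!\d)")


def redact(prompt: str) -> str:
    """Replace e-mail addresses and phone numbers with placeholders."""
    prompt = EMAIL.sub("[EMAIL]", prompt)
    return PHONE.sub("[PHONE]", prompt)


cleaned = redact("Contact jane.doe@example.com or 06 12 34 56 78")
```

With the sample input, both the address and the phone number are replaced by placeholders before the text would be sent to an external service.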

AI deployer or AI provider: a particularly fragile legal boundary

One of the key structural contributions of the AI Act lies in the distinction between AI deployers and AI providers.

At first glance, the distinction appears straightforward:

- the provider designs or places an AI system on the market;

- the deployer uses that system in the course of its professional activity.

In practice, the AI Act adopts a functional approach. An employer may, depending on the circumstances, be treated as a provider when it goes beyond passive use of the tool, in particular when it:

- trains or retrains a model using its own internal data;

- modifies operating parameters;

- integrates the AI system into an internal decision-making process;

- combines several tools to create a proprietary algorithmic solution.

These situations are common in HR, compliance or performance-management projects and result in enhanced obligations in terms of governance, documentation and risk management.

What measures should companies take to properly govern the use of AI?

To secure the use of AI in the workplace, companies must implement clear legal and organisational governance, including in particular:

- an internal AI use policy or charter;

- precise rules governing data and prompt management;

- training programmes addressing legal and compliance risks;

- robust contractual oversight of AI service providers.

Such an approach significantly reduces litigation risks, strengthens regulatory compliance and fosters trust with employees.

Conclusion

Artificial intelligence is a powerful tool, but it cannot be used freely or without control in the workplace. Its use directly engages the employer’s liability and requires legal expertise as rigorous as the technical expertise involved.

A clear strategy, combined with appropriate internal rules, is essential to reconcile innovation, performance and legal certainty. Governing AI in the workplace requires a comprehensive approach, integrating the GDPR, labour law, collective bargaining agreements, intellectual property law and the European regulation on artificial intelligence.

Dreyfus & Associés works in partnership with a global network of lawyers specialised in Intellectual Property.

Nathalie Dreyfus with the support of the entire Dreyfus firm team

FAQ

1. Is the employer responsible for decisions made by an AI system?

Yes. The employer remains fully responsible for decisions taken within the company, even where such decisions are assisted or prepared by an AI system. AI may serve as a decision-support tool, but it can never replace human responsibility, in particular in matters relating to recruitment, employee evaluation or disciplinary measures.

2. Does the AI Act replace the GDPR and labour law?

No. The AI Act does not replace either the GDPR or labour law. It supplements an existing legal framework. A single AI tool may be subject simultaneously to the AI Act, the GDPR, labour law rules and collective bargaining agreements, which requires a combined reading and a comprehensive compliance approach.

3. Can an employee refuse to use an AI tool imposed by the employer?

In principle, employees are required to comply with the employer’s instructions issued under its managerial authority. However, a refusal may be legitimate if the AI tool has not been properly disclosed to employees, if it infringes their fundamental rights, or if it has not been subject to the mandatory consultation of employee representatives. A lack of training or use contrary to data protection rules may also undermine the legitimacy of the tool.

4. What happens if an employee uses an unauthorised AI tool in the course of their work?

The use of an unauthorised AI tool may constitute a breach of professional obligations, particularly with regard to confidentiality and data security. However, any disciplinary measure requires that the applicable rules have been clearly defined and communicated to employees, and the measure itself must remain proportionate. In the absence of an internal policy or AI charter, the employer’s disciplinary leeway is reduced.

5. Can an employee claim intellectual property rights over content generated with the assistance of AI?

Yes, but only in very limited circumstances. An employee may claim intellectual property rights over AI-assisted content only where their human contribution is creative, decisive and identifiable. In the absence of a personal intellectual contribution (creative choices, structuring, or substantial modification of the content), the output generated by AI is not protected by copyright. Furthermore, where the content is created in the course of the employee’s duties, the exploitation rights generally belong to the employer or are contractually governed.

This publication is intended to provide general guidance to the public and to highlight certain issues. It is not intended to apply to specific situations nor to constitute legal advice.